Not long ago we began to consider analyzing program code not only for the presence of 64-bit or OpenMP errors but also for how complex it is to adapt for 64-bit and parallel systems. We would like to tell you about our first practical experiments in this area.

What prompted us to think about calculating metrics was a question from one of our clients: how to roughly estimate the complexity of porting a project to a 64-bit system. Since this question may be relevant to many of our potential clients, a tool for calculating metrics could be of great help here.

We are now uniting our two tools, Viva64 and VivaMP, into a single software product for developers: PVS-Studio. Within this product it would be natural to implement a new feature that predicts the complexity of a software product and estimates the time needed to adapt it for parallel or 64-bit systems.

Although there are many metrics, after reviewing them we did not manage to find any that estimate code complexity by the criteria we needed. Perhaps our search was not thorough enough, but maybe there really are no such metrics. That is why we implemented our own metrics, which obviously need further improvement. For now we can only tell you about the intermediate results achieved in the experimental program VivaShowMetrics.

The VivaShowMetrics program operates on preprocessed files (*.i) and builds graphs for five metrics as its output:

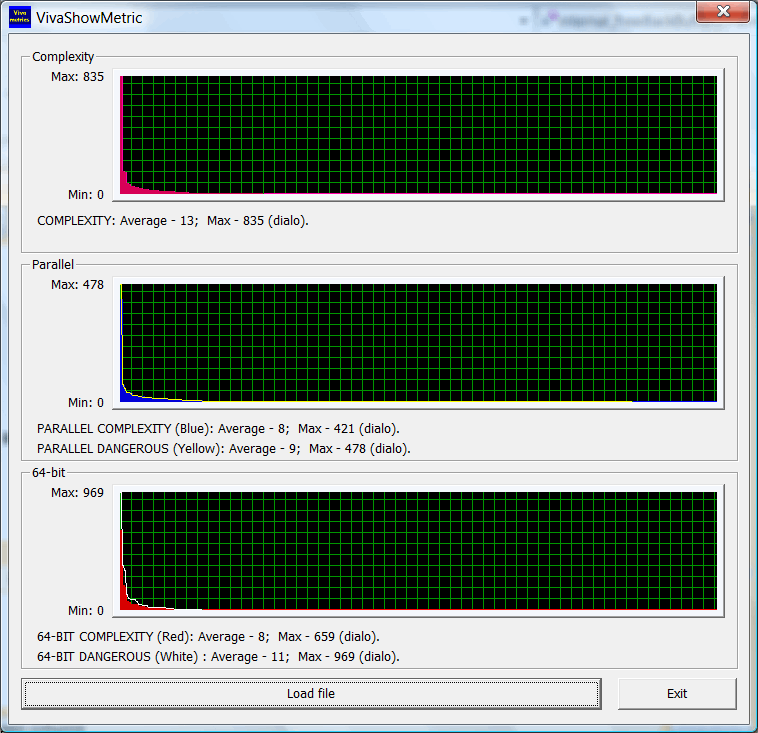

For convenient examination, the metrics collected for each function are sorted in descending order. As a result, for the CImg library (C++ Template Image Processing Toolkit) you can see a rather typical situation:

Functions that are unsafe and complex from the viewpoint of parallelization and 64-bit porting make up a small part of the total number of functions. The remaining functions are rather simple and for the most part serve as an interface for the user's code.

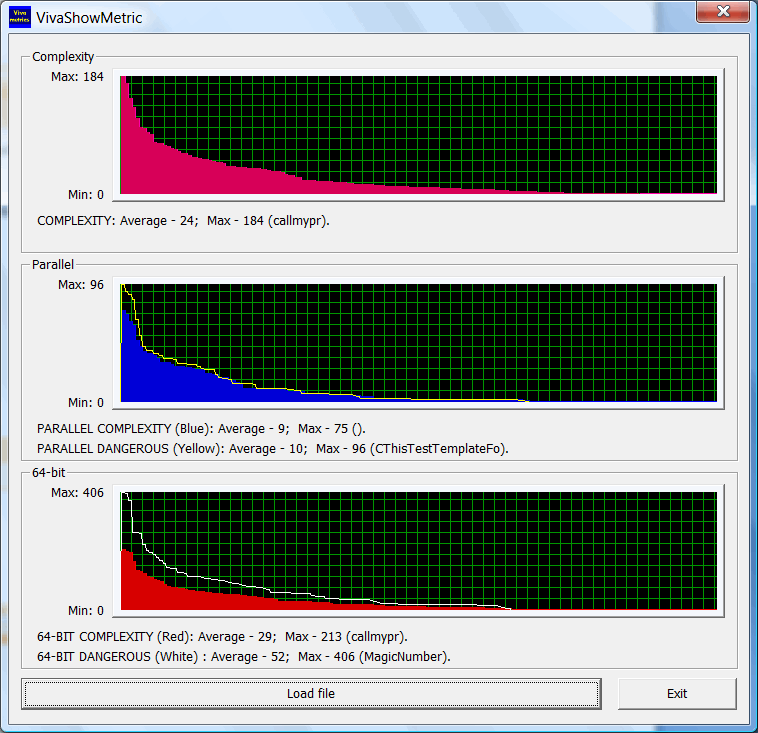
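The sorting step and the "small part of the total" observation can be sketched roughly as follows. This is only an illustration and not the code of VivaShowMetrics: the `FunctionMetric` record, the function names and the threshold that separates "unsafe" functions from the rest are all assumptions made for the example.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical record: one metric value computed for one function.
struct FunctionMetric {
    std::string name;  // function name
    double value;      // metric value, higher means more complex or unsafe
};

// Put the most complex functions first, as on the graphs.
void sortDescending(std::vector<FunctionMetric>& metrics) {
    std::stable_sort(metrics.begin(), metrics.end(),
                     [](const FunctionMetric& a, const FunctionMetric& b) {
                         return a.value > b.value;
                     });
}

// Fraction of functions whose metric exceeds an assumed threshold.
double shareAbove(const std::vector<FunctionMetric>& metrics, double threshold) {
    // Assumption for this sketch: metric values are non-negative.
    assert(threshold >= 0.0);
    if (metrics.empty()) return 0.0;
    const auto count = std::count_if(
        metrics.begin(), metrics.end(),
        [threshold](const FunctionMetric& m) { return m.value > threshold; });
    return static_cast<double>(count) / static_cast<double>(metrics.size());
}
```

For a library like CImg one would expect `shareAbove` to return a small value for any reasonable threshold, since most functions sit in the long, flat tail of the sorted graph.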

But if you load a file from the unit-test system of the Viva64 analyzer, you will see quite a different situation:

There are many functions for which the analyzer must show messages relating to 64-bit errors. Although the functions are small, they are rather complex and unsafe in every way. Because of this, the percentage of unsafe functions is rather large, and the graph of 64-bit complexity lies well above the graph of porting complexity. The explanation is that small functions in the code contain a large number of errors: the functions are unsafe, but they are easy to correct.
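To show what "small, unsafe, but easy to correct" can look like, here is a minimal sketch of a typical 64-bit defect. It is not taken from the Viva64 unit tests. The function names are hypothetical, and the comments assume a platform where `unsigned` is 32 bits wide and `std::size_t` is 64 bits wide, as on common 64-bit data models.

```cpp
#include <cstddef>

// Unsafe on 64-bit systems: the element index is computed in
// unsigned arithmetic, which wraps modulo 2^32 when unsigned is
// 32 bits wide, so large arrays are addressed incorrectly.
std::size_t offsetUnsafe(unsigned x, unsigned y, unsigned width) {
    unsigned index = y * width + x;  // may wrap before being widened
    return index;
}

// Corrected: the operands are std::size_t, so the multiplication
// is carried out at the full width of the platform's size type.
std::size_t offsetSafe(std::size_t x, std::size_t y, std::size_t width) {
    return y * width + x;
}
```

The correction only changes the parameter types, which is why such a function can score high on 64-bit complexity while contributing little to the overall porting effort.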
